When people think about AI automation, they often start with models and prompts. I think a better place to start is the data you already have.

Support emails, issue tracker threads, sales conversations, onboarding notes, internal docs, and CRM history often contain the same thing: repeated patterns, repeated decisions, and repeated explanations. In other words, they contain the playbook.

That data becomes much more useful once you stop treating it as archive material and start treating it as source material for a knowledge base.

The Problem Worth Solving

Most teams do not actually suffer from a lack of information. The problem is that useful context is scattered across too many tools and trapped in messy formats.

If you look at enough historical business data, a few things usually show up very clearly:

- the same patterns appear over and over

- the same decisions keep getting made

- the same exceptions require manual judgment

- the same communication style keeps showing up

That is exactly the kind of material agents can use well, if you prepare it properly.

Do Not Use the Raw Archive Directly

The naive approach is obvious: give the model access to the raw archive and hope it figures things out at runtime.

Sometimes that works. Often it does not.

Raw historical data is noisy. It contains duplicate context, outdated decisions, one-off exceptions, formatting junk, and plenty of material that should not shape future behavior. Even when retrieval is technically working, the model still has to infer what matters and what does not.

That is why I think the better pattern is:

- Extract the stable patterns from the raw data.

- Turn that distilled result into a knowledge base the automation can rely on.

The raw archive is not the product. The distilled operating manual is.
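
Extraction usually starts with unglamorous cleanup in code, before any agent sees the data. Here is a minimal Python sketch of that kind of pre-processing, assuming plain-text email bodies. It drops quoted reply history and a `-- ` signature block, then removes duplicates that differ only in case or whitespace by hashing a normalized copy. The function names and regex heuristics are hypothetical, not the exact rules behind my pipeline. A real export would need patterns tuned to the mail clients involved.

```python
import hashlib
import re

# Heuristics (hypothetical): tune these for the mail clients in your export.
QUOTED_LINE = re.compile(r"^\s*>")            # "> previous message" lines
REPLY_HEADER = re.compile(r"^On .+ wrote:$")  # "On <date>, <name> wrote:"


def clean_body(body: str) -> str:
    """Drop quoted history and the signature block, then tidy blank lines."""
    kept = []
    for line in body.splitlines():
        stripped = line.strip()
        # "-- " is the conventional signature delimiter; a reply header starts quoted history.
        if line.rstrip() == "--" or REPLY_HEADER.match(stripped):
            break  # everything after this point is signature or old thread
        if QUOTED_LINE.match(line):
            continue
        kept.append(stripped)
    text = "\n".join(kept)
    return re.sub(r"\n{3,}", "\n\n", text).strip()


def dedupe(bodies: list[str]) -> list[str]:
    """Clean each body and keep only the first copy of each normalized text."""
    seen: set[str] = set()
    unique = []
    for body in bodies:
        cleaned = clean_body(body)
        if not cleaned:
            continue  # nothing left after cleanup (e.g. a message that was all quote)
        normalized = " ".join(cleaned.lower().split())
        key = hashlib.sha256(normalized.encode("utf-8")).hexdigest()
        if key not in seen:
            seen.add(key)
            unique.append(cleaned)
    return unique
```

Only the compact, deduplicated output of a step like this is worth handing to an agent for clustering and interpretation.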

What to Distill

Focus on the pieces that stay useful across many future requests:

- recurring patterns and canonical actions

- decision rules and triage logic

- product limitations and policy boundaries

- preferred tone and phrasing

- escalation paths for ambiguous or risky cases

- rare but important edge cases kept separate from the main flow

If you mix every exception into the main knowledge base, the system gets noisy. If you throw them away, you lose useful operational knowledge. Keep the recurring cases in the main flow and the unusual ones in a separate manual layer.
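
One simple way to make the main-flow versus manual-layer split concrete, assuming an agent has already labeled each thread with a topic, is a frequency threshold. The sketch below is hypothetical: `split_layers` and the `min_count=5` cutoff are arbitrary choices. Frequency alone cannot tell you which rare cases are important, so treat the edge-case list as a review queue for a human, not a verdict.

```python
from collections import Counter


def split_layers(topic_labels: list[str], min_count: int = 5) -> tuple[list[str], list[str]]:
    """Split topics into (main_flow, edge_cases), each ordered by descending frequency.

    min_count is a hypothetical cutoff; rare topics still need a human decision
    about whether they belong in the manual edge-case layer or can be dropped.
    """
    counts = Counter(topic_labels)
    # Most frequent first; ties broken alphabetically so the output is stable.
    ranked = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    main_flow = [topic for topic, n in ranked if n >= min_count]
    edge_cases = [topic for topic, n in ranked if n < min_count]
    return main_flow, edge_cases
```

With made-up labels, `split_layers(["export"] * 12 + ["sync"] * 6 + ["refund"] * 2 + ["legacy-ios"])` returns `(["export", "sync"], ["refund", "legacy-ios"])`.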

My Example: Support History

One of my recent use cases was customer support for HealthExport.

Over the years I had accumulated thousands of support emails. With agent help, I used that data to build a private, Git-friendly repository with:

- cleaned and structured Q&A pairs

- synthesized markdown knowledge-base files for recurring topics

- a style guide extracted from historical replies

- a final master prompt for future support automation

The important part is that the system does not rely on raw email search at runtime. The historical data gets compressed into something much smaller and much more stable, and that distilled output powers an automation I built in n8n. If you want a starting point for that process, I published the generalized prompt for turning email exports into a Markdown knowledge base as a GitHub Gist.
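
To illustrate the "Git-friendly" part, here is a minimal sketch that renders Q&A pairs into one markdown file per topic. It assumes the pairs have already been extracted and tagged with a topic. `QAPair`, `slugify`, and `render_kb` are hypothetical names, not the structure of my actual repository. Sorting topics and questions keeps the output stable, so regenerating the files produces small, readable diffs.

```python
import re
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class QAPair:
    topic: str
    question: str
    answer: str


def slugify(topic: str) -> str:
    """Turn a topic name into a safe, lowercase file name stem."""
    return re.sub(r"[^a-z0-9]+", "-", topic.lower()).strip("-")


def render_kb(pairs: list[QAPair]) -> dict[str, str]:
    """Group pairs by topic and return {filename: markdown} with stable ordering."""
    by_topic: dict[str, list[QAPair]] = defaultdict(list)
    for pair in pairs:
        by_topic[pair.topic].append(pair)
    files = {}
    for topic in sorted(by_topic):
        lines = [f"# {topic}", ""]
        # Sorted questions mean re-running the export does not reshuffle the file.
        for pair in sorted(by_topic[topic], key=lambda p: p.question):
            lines += [f"## {pair.question}", "", pair.answer.strip(), ""]
        files[f"{slugify(topic)}.md"] = "\n".join(lines).rstrip() + "\n"
    return files
```

Writing each returned string to disk and committing the result gives you the reviewable, diffable artifact described above.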

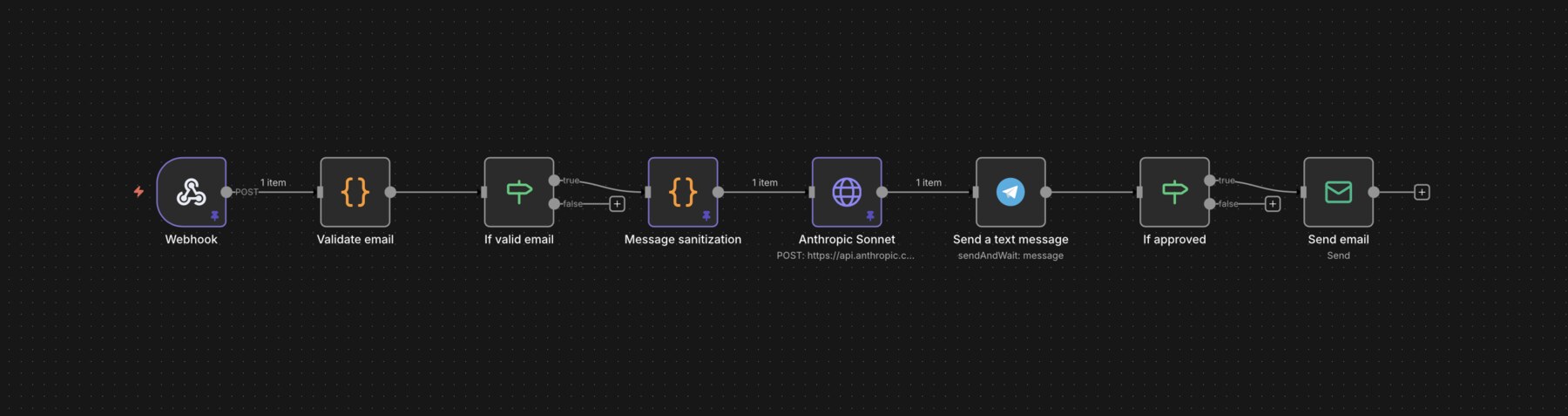

The workflow in n8n, where the incoming request is checked, enriched with the knowledge base, and turned into a reply draft.

In my app, a user can tap a Contact Support button and send a message along with their email address. The server-side automation checks the submission and, if the email address is valid, sends the user's message to Claude Sonnet together with the support knowledge base and the master prompt instructions.
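
The n8n workflow is visual, but the logic of this step is small. Here is a rough Python equivalent of it, not the workflow itself. It applies a loose email check (the regex is a hypothetical heuristic, not full RFC 5322 validation) and, as an added assumption, also rejects empty messages. It then builds a request body for Anthropic's Messages API with the master prompt and knowledge base in the `system` field. The model ID and `max_tokens` value are placeholders, and `build_draft_request` only builds the request; sending it is left to whatever HTTP client you use.

```python
import json
import re
from typing import Optional

# Deliberately loose check (hypothetical): one "@", no spaces, a dot in the domain.
EMAIL_RE = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")
MESSAGES_URL = "https://api.anthropic.com/v1/messages"


def build_draft_request(
    email: str, message: str, master_prompt: str, kb_text: str, model: str, api_key: str
) -> Optional[tuple[str, dict[str, str], str]]:
    """Return (url, headers, json_body) for a reply-draft request, or None to stop."""
    if not EMAIL_RE.match(email.strip()) or not message.strip():
        return None  # invalid email (or, by assumption, empty message): no draft
    body = {
        "model": model,      # placeholder: the Sonnet model ID you actually use
        "max_tokens": 1024,  # placeholder budget for a support reply
        # Instructions first, then the distilled knowledge base as reference material.
        "system": f"{master_prompt}\n\n# Knowledge base\n\n{kb_text}",
        "messages": [{"role": "user", "content": message.strip()}],
    }
    headers = {
        "x-api-key": api_key,
        "anthropic-version": "2023-06-01",
        "content-type": "application/json",
    }
    return MESSAGES_URL, headers, json.dumps(body)
```

Returning `None` instead of raising mirrors the "only continue if there is a valid email address" branch: the workflow simply stops.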

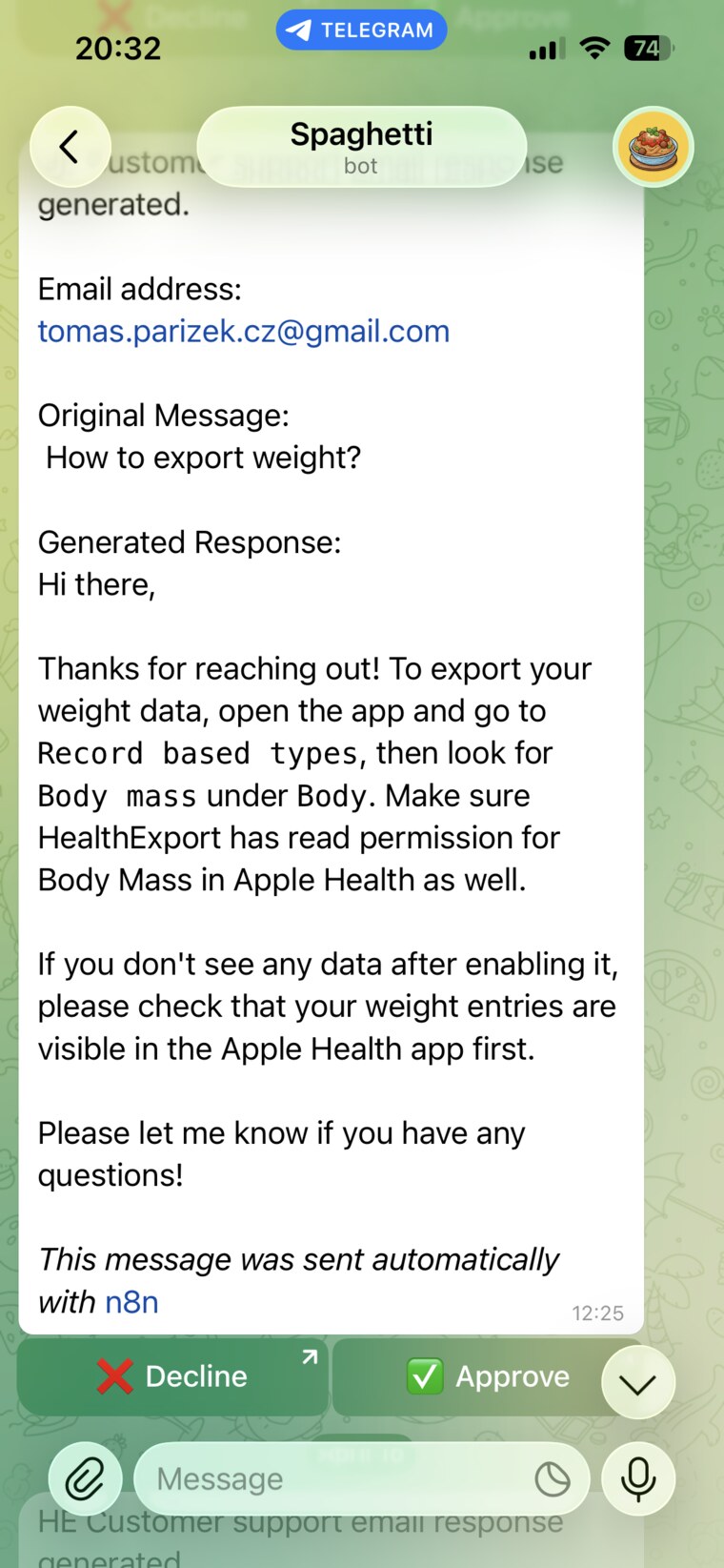

The model writes a reply draft in my usual style, and that draft then goes to Telegram for approval. If I approve it, the email is sent. If I do not like it, I can reject it and handle the case manually.
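
The approval step can be sketched the same way. Assuming the Telegram Bot API's `sendMessage` method with an inline keyboard, the draft is posted with Approve and Reject buttons whose `callback_data` carries a draft ID, and each button press is then mapped to the next step. `approval_message`, `handle_decision`, and the step names they return are hypothetical, and the actual email sending is left out.

```python
# Sketch of the approval loop (hypothetical names). The payload is meant to be
# POSTed as JSON to https://api.telegram.org/bot<token>/sendMessage.
TELEGRAM_TEXT_LIMIT = 4096  # Telegram's maximum message text length


def approval_message(chat_id: int, draft_id: str, to_email: str, draft: str) -> dict:
    """Build a sendMessage payload showing the draft with Approve/Reject buttons."""
    text = f"Draft reply to {to_email}:\n\n{draft}"
    return {
        "chat_id": chat_id,
        "text": text[:TELEGRAM_TEXT_LIMIT],  # crude truncation for very long drafts
        "reply_markup": {
            # callback_data is limited to 64 bytes, so keep draft IDs short.
            "inline_keyboard": [[
                {"text": "Approve", "callback_data": f"approve:{draft_id}"},
                {"text": "Reject", "callback_data": f"reject:{draft_id}"},
            ]]
        },
    }


def handle_decision(callback_data: str, pending: dict[str, dict]) -> str:
    """Map a button press to the next workflow step."""
    action, _, draft_id = callback_data.partition(":")
    if draft_id not in pending:
        return "unknown_draft"  # already handled, or not a draft we are tracking
    if action == "approve":
        return "send_email"
    if action == "reject":
        return "manual_handling"  # the case falls back to a human
    return "ignore"
```

Nothing reaches the customer unless the Approve branch fires, which is what keeps the automation safe to run unattended.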

Why This Pattern Works

What makes this useful is not just that a model is involved. It is that the work is split cleanly: code handles cleanup and structuring, agents handle interpretation and clustering, and the final automation uses a compact knowledge artifact instead of a giant pile of historical examples.

That gives you a few practical advantages:

- lower token cost at runtime

- less prompt noise

- more consistent answers

- easier human review

- better preservation of your actual operating habits

When the output is a small set of markdown files, you can inspect it, edit it, diff it in Git, and improve it over time. That is much easier than trying to reason about a black box filled with years of raw messages.

This Is Bigger Than Support

The same pattern can work with almost any body of operational data that contains repeated decisions or repeated explanations:

- sales conversations

- internal documentation

- issue tracker history

- customer communication

If the data reflects how you repeatedly solve similar problems, it can likely be distilled into something an agent can use.

That is the broader point. Useful automation often starts much earlier than people think. It starts when you realize you already have the context; it is just trapped in a messy form.

Takeaways

- The best input for automation is often data you already own.

- Historical communication contains both factual knowledge and behavioral patterns.

- Distilling the archive works better than relying on the raw archive at runtime.

- Approval workflows are a practical way to automate without giving up control.

If you have been thinking about AI automation mainly in terms of prompts and models, it is worth looking at your existing data first. For solo developers and larger teams alike, that is often where the real leverage is.